Abstract

Despite their ubiquity, wireless earbuds remain audio-centric due to size and power constraints. We present VueBuds, the first camera-integrated wireless earbuds for egocentric vision, capable of operating within stringent power and form-factor limits. Each VueBud embeds a camera into a Sony WF-1000XM3 to stream visual data over Bluetooth to a host device for on-device vision language model (VLM) processing. We show analytically and empirically that while each camera's field of view is partially occluded by the face, the combined binocular perspective provides comprehensive forward coverage. By integrating VueBuds with VLMs, we build an end-to-end system for real-time scene understanding, translation, visual reasoning, and text reading, all from low-resolution monochrome cameras drawing under 5 mW through on-demand activation. Through online and in-person user studies with 90 participants, we compare VueBuds against smart glasses across 17 visual question-answering tasks and show that our system achieves response quality on par with Ray-Ban Meta. Our work establishes low-power camera-equipped earbuds as a compelling platform for visual intelligence, bringing rapidly advancing VLM capabilities to one of the most ubiquitous wearable form factors.

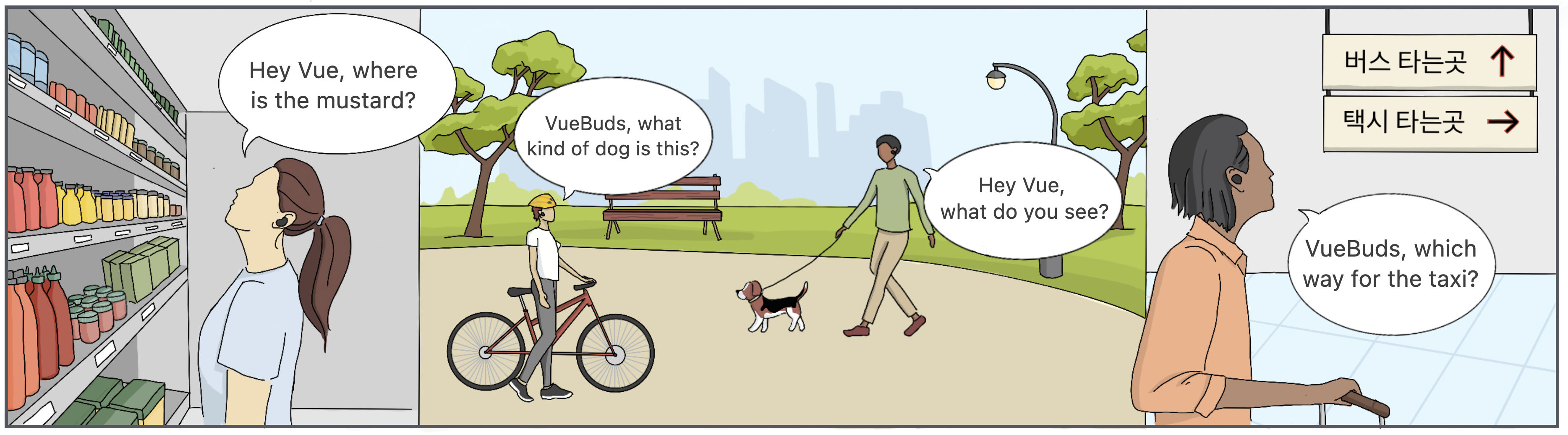

Applications of VueBuds. Our camera-integrated wireless earbuds enable natural language queries for everyday visual tasks such as locating items in a store, identifying objects, obtaining scene-level descriptions, and interpreting foreign text for navigation.

VueBuds integrated with Sony wireless earbuds. The custom camera module (left) is powered directly from the earbud battery, with 3D-printed enclosures (middle) enabling forward-facing capture. VueBuds charge via the original case (right).

Keywords: Visual Computing, Earables, Multimodal Interaction, Camera Earbuds

Contact: vuebuds@cs.washington.edu